— C. A. R. Hoare

It seems to me that modern computers trap people in a vicious cycle. Compatibility guarantees breed complexity over time as the world changes. Complexity is managed by introducing layers of abstraction. Abstractions introduce new compatibility guarantees. Over the decades this vicious cycle leads to even professional programmers understanding only a tiny fraction of the software infrastructure that runs their computers. As a result, our world is increasingly captured by software that is unaccountable to people.

For several years now I've had a vision for a computer that allows anyone to audit its inner workings, where any operation can be decomposed strictly into a parsimonious combination of simpler operations, terminating without cyclic dependencies or circular reasoning at some ground level. Ideally it would do this in a way that rewards curiosity, leading to a virtuous cycle where an order of magnitude more people grow to understand how their computer works as they use it.

Nowhere in this picture are compatibility guarantees, version numbers or forced upgrades. At any point your computer should be internally consistent and free of known historical accidents. Even if this means upgrades are more work and therefore less frequent, and that our computers must be slower. Or do less. That seems like a worthwhile trade for a more sustainable world.

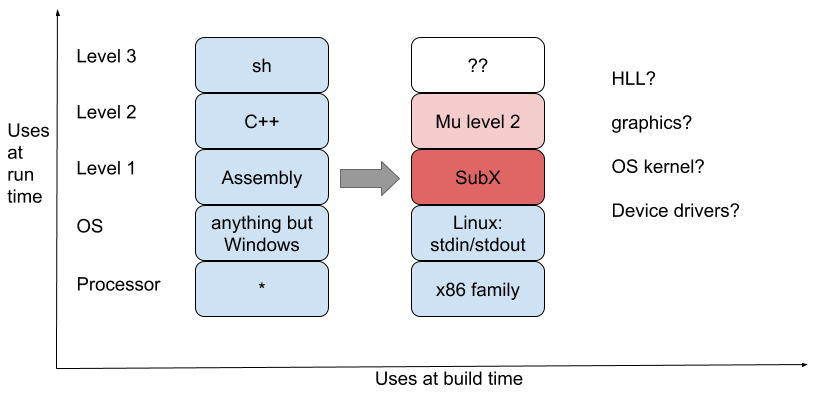

At the start of 2020 the state of the Mu computer looked like this:

The blue stack on the left was my current computer, without changes. The stack on the right was a better computer using some existing pieces (blue) and some new pieces (red). The arrow between the stacks was like a wormhole between worlds. I was using the existing world to build the new world, but the idea was for the new world to be self-sufficient once it was set up.

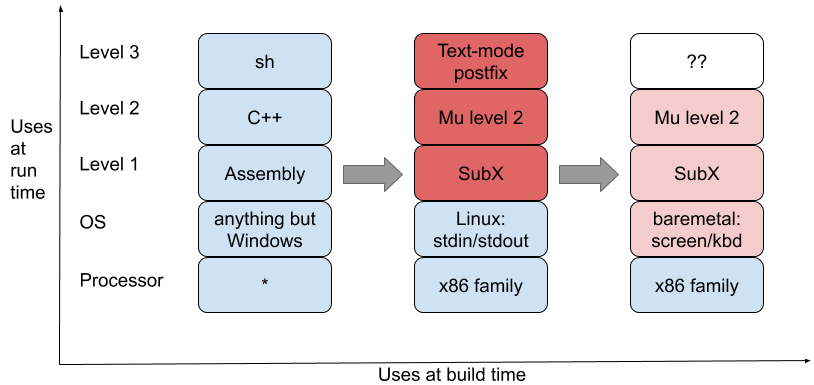

At the end of 2020, more details of the picture are becoming clear:

I now have a self-sufficient computer in the middle stack that can rebuild itself without needing much mainstream software. Since its focus is on building itself, the currency of the realm is streams of text. An existing Linux kernel provides clean primitives for operating on streams of text over stdin/stdout. Beyond that the Mu computer fends for itself. It even has a shell, though it doesn't look anything like what Unix has taught us to expect:

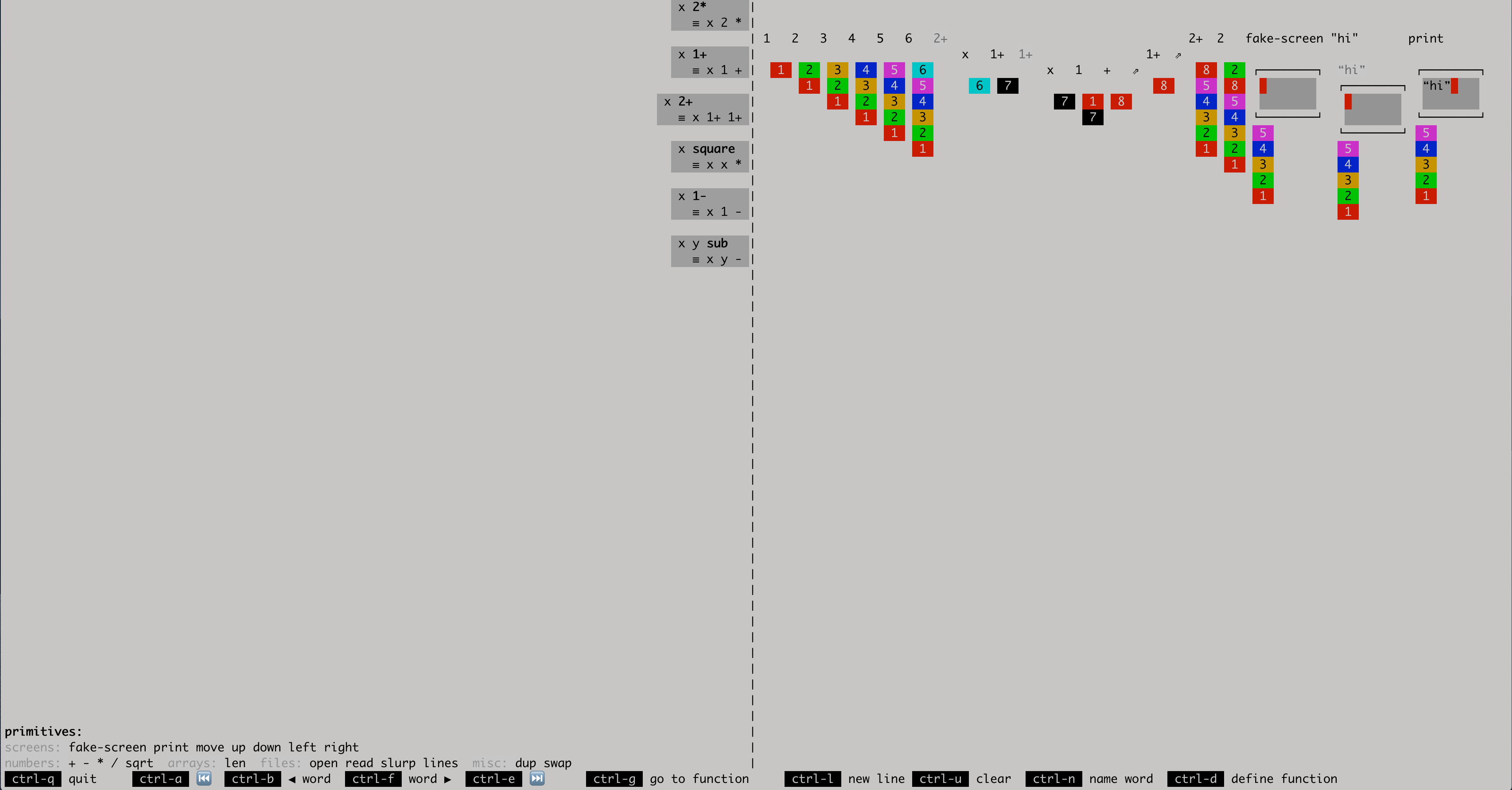

It's a live-updating postfix environment entirely in text mode that shows the top-level evolution of your computation (including side-effects in little fake screens) at a glance, where you can drill down into any function call when you need more details. Check out the 15 two-minute demos I made in 2020.
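To make "postfix" and "top-level evolution of your computation" a little more concrete, here is a minimal sketch in Python. It is not Mu's shell or Mu's language; `eval_postfix` and its three-operator vocabulary are hypothetical. It evaluates whitespace-separated words left to right against a stack and records a snapshot of the stack after every word, loosely analogous to the step-by-step view described above.

```python
from typing import List, Tuple

# Hypothetical postfix evaluator (not Mu's shell): evaluates words left to
# right and records the stack after every word, so each step is inspectable.
def eval_postfix(program: str) -> Tuple[List[int], List[Tuple[str, List[int]]]]:
    ops = {
        "+": lambda a, b: a + b,
        "-": lambda a, b: a - b,
        "*": lambda a, b: a * b,
    }
    stack: List[int] = []
    trace: List[Tuple[str, List[int]]] = []
    for word in program.split():
        if word in ops:
            if len(stack) < 2:
                raise ValueError(f"stack underflow at {word!r}")
            b = stack.pop()  # top of stack is the right-hand operand
            a = stack.pop()
            stack.append(ops[word](a, b))
        else:
            stack.append(int(word))  # raises ValueError for unknown words
        trace.append((word, list(stack)))  # copy, so later steps don't mutate it
    return stack, trace

if __name__ == "__main__":
    final, steps = eval_postfix("1 2 + 3 *")
    for word, snapshot in steps:
        print(f"{word:>3}  {snapshot}")
```

Running it prints one line per word, ending with the stack `[9]`. Keeping a copy of the stack after every word, rather than only the final result, is what lets each intermediate step be displayed and inspected.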

I don't represent size and complexity in the pictures above. The mainstream stack running on our computers contains hundreds of millions of lines of code, and would look like a ball of spaghetti if we zoomed into the connections between levels. The middle stack relies on twelve million lines of code in the Linux kernel, but aside from that weighs in at 50k lines of straight-line dependencies, most of them mapping to individual instructions of machine code. Two thirds of those 50k lines are comments or automated tests. (It does a lot less, of course. The goal is to start with a sustainable stack and then preserve its sustainability properties as we thoughtfully add functionality.) Finally, the most nascent stack, on the right, gets rid of the twelve million lines of Linux, and can do even less at the moment. All it can do is draw pixels on the screen and process keystrokes.

- No wifi, no networking.

- No file system yet, just sectors on a local disk.

- No multitouch, no touchscreen, no mouse, not even any shift key support yet.

- No graphics acceleration, no fonts, no way to print text.

- No virtual memory, no GC, not even any memory reclamation yet.

But it's a start. A moderately sized screen, a keyboard, gigabytes of RAM and a guarantee of memory safety. Let's see where 2021 leads.

(Initial revision on Mastodon.)

Comments gratefully appreciated. Please send them to me by any method of your choice and I'll include them here.