My goal for Mu is software that is accountable to the people it affects. But it's been difficult to talk to people about Mu's goals because of the sheer number of projects that use similar words but lead to very different priorities and actions. Some of these I like to be associated with, some not so much.

if you care about making software accountable

There are two ways to describe the provenance of software: in terms of the people who made it or checked it out, and in terms of its internals. The two ways are often described with similar-sounding words.

On one hand, trust chaining is a way to transform trust in one person into trust in another.

Trust chaining is also used to transform trust in people into trust in artifacts, by performing arithmetic on an artifact to verify a trust relationship such as "person X owns this signature".
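To show what "performing arithmetic on an artifact" can look like, here's a minimal sketch in Python using textbook RSA. Everything in it is an assumption for illustration: the key values are hypothetical and far too small to be secure, and `digest`, `sign` and `verify` are names I made up rather than the API of any real signing tool.

```python
import hashlib

# Toy textbook-RSA key. Hypothetical values, far too small to be secure.
P, Q = 61, 53
N = P * Q                            # public modulus
E = 17                               # public exponent
D = pow(E, -1, (P - 1) * (Q - 1))    # private exponent (needs Python 3.8+)

def digest(artifact: bytes) -> int:
    """Reduce an artifact to a number smaller than the modulus."""
    return int.from_bytes(hashlib.sha256(artifact).digest(), "big") % N

def sign(artifact: bytes) -> int:
    """Only the holder of D (person X) can compute this."""
    return pow(digest(artifact), D, N)

def verify(artifact: bytes, signature: int) -> bool:
    """Anyone holding the public pair (N, E) can redo this arithmetic."""
    return pow(signature, E, N) == digest(artifact)

artifact = b"contents of some binary"
signature = sign(artifact)
print(verify(artifact, signature))
```

Note what the check establishes: that whoever holds the private exponent signed these exact bytes. It says nothing about why they considered the artifact trustworthy.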

On the other hand, proofs convey an argument to a reader and let the reader assess how objective and ironclad it is without needing to trust the author.

Automated proofs can be checked by a computer, but are still amenable to human readers. (Beyond automated proofs, zero-knowledge proofs are more about verification: they convey ownership of artifacts rather than objectivity of knowledge. That way lie ideas like proof of work. I want to exclude these from my preferred notion of 'proof'. Let me know if you can think of a clearer word than 'proof' for my desired category.)

Trusted computing uses trust chaining to shackle a software stack to some distant server running some arbitrary software. (more details)

Reproducible builds are about getting some arbitrary set of software to generate deterministic output given identical input. The build recipe acts as a proof that a binary was generated from it.
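Here's a minimal sketch of the idea in Python. The `build` function is a hypothetical stand-in for a real build step (it just concatenates sources in a fixed order), and the file names are made up. The point is only that two parties starting from identical inputs can compare fingerprints of their outputs.

```python
import hashlib
from typing import Mapping

def build(sources: Mapping[str, str]) -> bytes:
    """Hypothetical build step: combine sources into one 'binary'.

    Determinism comes from avoiding anything that varies between runs:
    files are visited in sorted order and no timestamps are embedded.
    """
    parts = []
    for name in sorted(sources):
        parts.append(f"{name}\n{sources[name]}\n")
    return "".join(parts).encode("utf-8")

def fingerprint(binary: bytes) -> str:
    """Short, comparable summary of a build output."""
    return hashlib.sha256(binary).hexdigest()

# Two people with the same sources, listed in a different order.
mine = {"main.mu": "fn main { }", "lib.mu": "fn helper { }"}
yours = {"lib.mu": "fn helper { }", "main.mu": "fn main { }"}

print(fingerprint(build(mine)) == fingerprint(build(yours)))
```

If the fingerprints ever differ, either the inputs differed or something in the build depends on more than its inputs.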

Bootstrappable builds are about making all the software needed for a program available for auditing. Including all the software needed to build it, and all the software needed to build that, and so on. The build recipe acts as a proof that source code for the entire supply chain is available for auditing.
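The "and so on" above amounts to a transitive closure over build dependencies. A minimal sketch in Python, assuming a hypothetical dependency graph (the package names and the `BUILD_DEPS` table are invented for illustration, not taken from any real distribution):

```python
from typing import Dict, List, Set

# Hypothetical graph: each package lists what is needed to build it.
BUILD_DEPS: Dict[str, List[str]] = {
    "app": ["compiler", "libc"],
    "libc": ["compiler"],
    "compiler": ["assembler"],
    "assembler": [],
}

def supply_chain(package: str, deps: Dict[str, List[str]]) -> Set[str]:
    """Everything needed to build `package`, directly or indirectly."""
    seen: Set[str] = set()
    stack = [package]
    while stack:
        current = stack.pop()
        if current in seen:
            continue  # already visited; also guards against cycles
        seen.add(current)
        stack.extend(deps[current])
    return seen

def unauditable(package: str, deps: Dict[str, List[str]],
                has_source: Set[str]) -> Set[str]:
    """Members of the supply chain whose source is not available."""
    return supply_chain(package, deps) - has_source

print(sorted(supply_chain("app", BUILD_DEPS)))
print(sorted(unauditable("app", BUILD_DEPS, {"app", "libc"})))
```

In these terms, a build is bootstrappable when `unauditable` comes back empty for the whole chain, not just for the program at the top.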

(Bootstrapping is about getting a compiler to build its own source code. Such a similar term, so antithetical to bootstrappable builds.)

Collaborative code review is about getting people to sign off on software packages once source code is available. There's no proof here, but it becomes possible to verify that some people inspected sources and found no major issues.

Problems in this category:

- people make mistakes; I want to verify not just what people think, but why

- stacks currently grow complex faster than attempts to make them auditable

- auditability doesn't help answer people's questions about why a piece of software does something seemingly questionable

Even so:

- some auditability is a vast improvement over none

- auditability is vastly more important for the lowest levels of the stack, where it's more tractable to obtain

if you care about bringing software closer to the people it affects

Minimalism is about building simple programs with as little code as possible, starting from some arbitrary set of software. (example example example)

Malleable software or end-user programming is about building programs to be as easy to change as they are to use. Building atop some arbitrary set of software.

Problems in this category:

- things (example) are often not minimal or simple if you get into details (example)

- to permit open-ended changes you have to take control of more and more of the stack (example)

- minimal software has a constant temptation to grow less minimal over time (example)

Even so:

- they can deliver a great deal of capability even if they're not perfect

editorializing

I care about software that is accountable to the people it affects. Both sides matter.

The word 'trust' provides cover for a large amount of bad behavior. A trusted platform module provides trust to large companies about the hardware their "intellectual property" runs on. It does not provide trust to the individual people whose computers it inhabits.

Proofs work better than some cryptographic sign-off. If a trust relationship is found to have a problem, it must be revoked wholesale. Repeated revocations reduce confidence. If a proof is found to have a problem, it's usually easy to patch. There are enough details to determine if things are improving.

Trust relationships can change. Habits can be hard to change. That contrast implies that the result of an audit cannot be binary. It is untenable to tell people to stop using software that grows abusive. When software does something a person dislikes, they should be able to 1. find the sources, 2. build the sources, 3. modify the sources at any level of granularity, and 4. feel confident in the results of their actions.

It's important that this be an incremental process. Minor tweaks in response to minor dissatisfactions aren't just first-world problems. Going through all four steps for something minor creates confidence that one will be prepared when major changes are needed.

Size matters. It can take surprisingly little code to lose this property of incremental accountability.

The number of zones of ownership matters. You can make incrementally accountable software by relying on others, but not too many others. Minimizing the dependency tree may well be more important than minimizing lines of code.

Mu is reproducible, auditable and almost entirely incrementally accountable (property 3. above hasn't been stress-tested much and so remains a work in progress). I flatter myself that it's difficult to ask "why" questions in the form of code changes without triggering a failing test or other error message that answers them.

However, Mu too has problems:

- I have to do without many things at the moment: network support, concurrency, files, pointing device, performance, etc., etc.

- it's unclear if this way leads to any program anybody else would find useful enough to want to modify

Even so:

- if it's hard to create incrementally accountable software, perhaps we shouldn't be relying on software so much

Conclusion

It's worth thinking about different pieces of software in terms of what you give up when using them. Some points of comparison:

- Most software: Memory safety (either directly or in build dependencies), reproducible builds, auditable builds, incremental accountability.

- The suckless school: Memory safety, reproducible builds, auditable builds, incremental accountability.

- Rust: reproducible builds, auditable builds, incremental accountability.

- Bootstrappable builds: Memory safety, incremental accountability.

- Uxn: Memory safety, large screen, lots of RAM, reproducible builds. (Incremental accountability seems less important when programs are so tiny.)

- Mu on Linux: Graphics, some memory safety and incremental accountability (Linux dependency), portability

- Mu: Networking, concurrency, files, performance, portability.

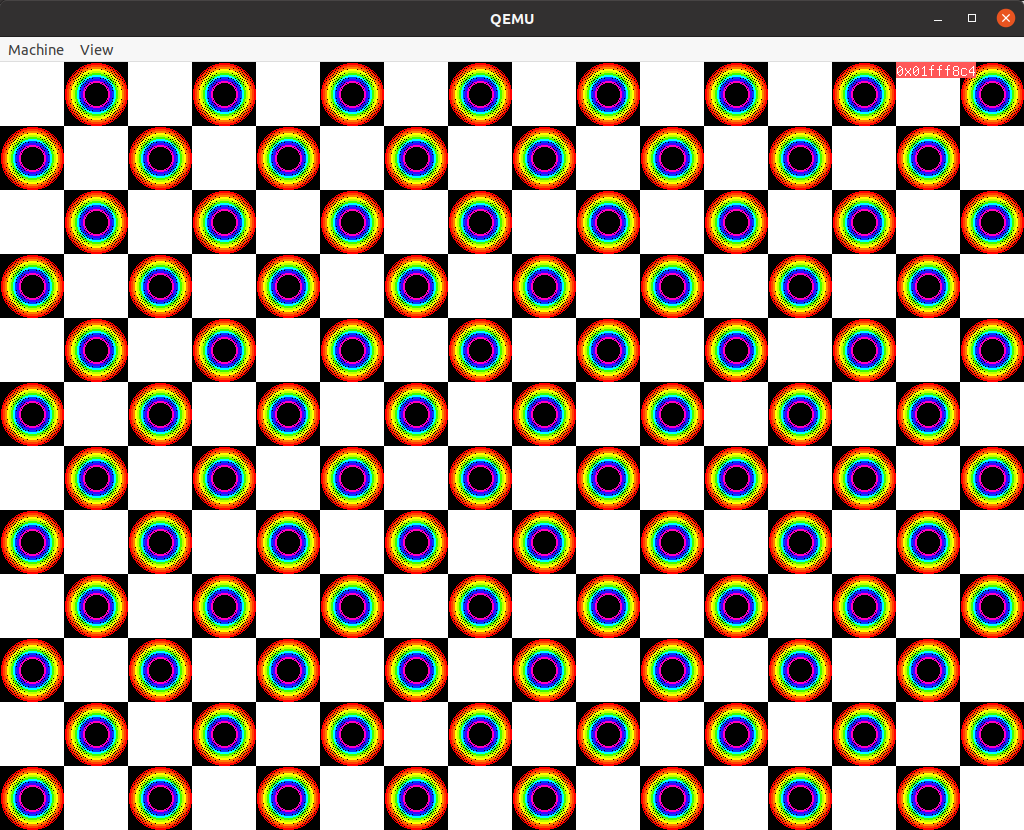

Thank you for reading. Here's a screenshot of a Mu program I made for my kids today: "chessboard with rainbows".

(This post was inspired by Ribbonfarm's periodic maps.)

comments

Comments gratefully appreciated. Please send them to me by any method of your choice and I'll include them here.

This is a little different from auditable. Eg, I would prefer a webcam hardwired with a light to show when it is powered on vs a webcam where I could inspect it to determine the status, but that requires my curiosity and effort.

The distinction you make is also important. A device can only have a limited number of lights and dials, and I don't have much to contribute regarding what to use them for. It feels like a plenty hard problem just to provide all the data somewhere so that others can decide what to highlight.

Is that comment by you? I just want to clarify that that post is not written by me. It's a long quote from an essay by Richard Gabriel.

Yes.

> /post/habitability is [...] a long quote from an essay by Richard Gabriel

Yes, but it's a nice descriptor.

Or maybe we should be bold enough to synthesize our own word. "hactile software"? (*hack* + *tactile* = *hactile*; something that exhibits the quality is said to have *hactility*) Too corny?

> Trading off notational convenience for tests may seem regressive, but I suspect high-level languages aren't particularly helpful in understanding large codebases. No matter how good a notation is, it can only let you see a tiny fraction of a large program at a time. Logs, on the other hand, can let you zoom out and take in an entire *run* at a glance, making them a superior unit of comprehension. If I'm right, it makes sense to prioritize the right *tactile* interface for working with and getting feedback on large programs before we invest in the *visual* tools for making them concise.

(From November 2014 to March 2016)

In the process, I was also reminded of another fount of neighbors on the habitability side: the literature on tailorable software in the 90's, as described in chapter 2 of Philip Tchernavskij's thesis.